Last week the White House launched its new roadmap for digital government. This included the publication of Digital Government: Building a 21st Century Platform to Better Serve the American People (PDF version), the issuing of a Presidential directive and the announcement of White House Innovation Fellows.

In other words, it was a big week for those interested in digital and open government. Having had some time to digest these docs and reflect upon them, below are some thoughts on these announcements and lessons I hope governments and other stakeholders take from them.

First off, the core document – Digital Government: Building a 21st Century Platform to Better Serve the American People – is a must read if you are a public servant thinking about technology or even about program delivery in general. In other words, if your email has a .gov in it or ends in something like .gc.ca you should probably read it. Indeed, I’d put this document right up there with another classic must read, The Power of Information Taskforce Report commissioned by the Cabinet Office in the UK (which if you have not read, you should).

Perhaps most exciting to me is that this is the first time I’ve seen a government document clearly declare something I’ve long advised governments I’ve worked with: data should be a layer in your IT architecture. The problem is nicely summarized on page 9:

Traditionally, the government has architected systems (e.g. databases or applications) for specific uses at specific points in time. The tight coupling of presentation and information has made it difficult to extract the underlying information and adapt to changing internal and external needs.

Oy. Isn’t that the case. Most government data is captured in an application and designed for a single use. For example, say you run the license renewal system. You update your database every time someone wants to renew their license. That makes sense because that is what the system was designed to do. But maybe you’d like to track, in real time, how frequently the database changes, and by whom. Whoops. The system wasn’t designed for that because it wasn’t needed in the original application. Of course, being able to present the data in that second way might be a great way to assess how busy different branches are so you could warn prospective customers about wait times. Now imagine this lost opportunity… and multiply it by a million. Welcome to government IT.
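For the more technically inclined, here is a minimal sketch of what decoupling could look like for the license renewal example. Everything in it (the `RenewalEvent` record, the branch and clerk names, the two presentation functions) is hypothetical and invented for illustration; it is not taken from the White House document. The point is only that once renewals live in their own data layer, a second use costs a few lines rather than a new system.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class RenewalEvent:
    """One row in the hypothetical data layer: a single license renewal."""
    license_id: str
    branch: str
    clerk: str
    renewed_at: datetime


# The data layer. A plain list stands in for a real database (made-up rows).
EVENTS = [
    RenewalEvent("A100", "Downtown", "kim", datetime(2012, 5, 28, 9, 5)),
    RenewalEvent("A101", "Downtown", "kim", datetime(2012, 5, 28, 9, 20)),
    RenewalEvent("A102", "Uptown", "lee", datetime(2012, 5, 28, 9, 40)),
]


def renewal_receipt(event):
    """Presentation 1: what the original renewal application shows."""
    return f"License {event.license_id} renewed at {event.branch}"


def renewals_per_branch(events):
    """Presentation 2: a later use nobody planned for, built on the same data."""
    return Counter(event.branch for event in events)
```

Calling `renewals_per_branch(EVENTS)` gives a count per branch, which is the raw material for the wait-time warning described above.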

Decoupling data from application is pretty much the first thing in the report. Here’s my favourite chunk from the report (italics mine, to note extra favourite part).

The Federal Government must fundamentally shift how it thinks about digital information. Rather than thinking primarily about the final presentation—publishing web pages, mobile applications or brochures—an information-centric approach focuses on ensuring our data and content are accurate, available, and secure. We need to treat all content as data—turning any unstructured content into structured data—then ensure all structured data are associated with valid metadata. Providing this information through web APIs helps us architect for interoperability and openness, and makes data assets freely available for use within agencies, between agencies, in the private sector, or by citizens. This approach also supports device-agnostic security and privacy controls, as attributes can be applied directly to the data and monitored through metadata, enabling agencies to focus on securing the data and not the device.
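To make “structured data associated with valid metadata” a little more concrete, here is a rough sketch of what a response from such a web API might look like. The field names (`title`, `custodian`, `updated`, `audience`) are my own assumptions, not the federal metadata guidelines, and the records are made up.

```python
import json


def build_api_response(records, metadata):
    """Wrap raw records and their descriptive metadata in one JSON document."""
    return json.dumps({"metadata": metadata, "data": records}, indent=2)


# Hypothetical metadata: these keys are illustrative, not an official schema.
metadata = {
    "title": "License renewals by branch",
    "custodian": "Licensing Office",  # who is accountable for the data
    "updated": "2012-05-28",
    "audience": "public",             # an access attribute attached to the data itself
}

# Made-up records standing in for the structured data.
records = [
    {"branch": "Downtown", "renewals": 2},
    {"branch": "Uptown", "renewals": 1},
]

payload = build_api_response(records, metadata)
```

Because the `audience` attribute travels with the data rather than with a particular website or device, any presentation layer that consumes `payload` can apply the same access rule.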

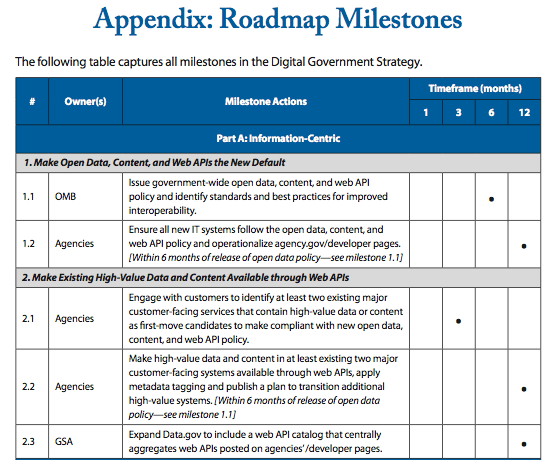

To help, the White House provides a visual guide for this roadmap. I’ve pasted it below. However, I’ve taken the liberty to highlight how most governments try to tackle open data on the right – just so people can see how different the White House’s approach is, and why this is not just an issue of throwing up some new data but a total rethink of how government architects itself online.

There are, of course, a bunch of things that flow out of the White House’s approach that are not spelled out in the document. The first and most obvious is that once you make data an information layer you have to manage it directly. This means that data starts to be seen and treated as an asset, which in turn means understanding who the custodian is and establishing a governance structure around it. This is something that, previously, only libraries and statistical bureaus have really understood (and sometimes not even them!).

This is the dirty secret about open data: to do it effectively you actually have to start treating data as an asset. For the White House the benefit of taking that view of data is that it saves money. Creating a separate information layer means you don’t have to duplicate it for all the different platforms you have. In addition, it gives you more flexibility in how you present it, meaning the costs of showing information on different devices (say computers vs. mobile phones) should also drop. Cost savings and increased flexibility are the real drivers. Open data becomes an additional benefit. This is something I dive into in deeper detail in a blog post from July 2011: It’s the icing, not the cake: key lesson on open data for governments.

Of course, having a cool model is nice and all, but, like the previous directive on open government, this document has hard requirements designed to force departments to begin shifting their IT architecture quickly. So check out this interesting tidbit out of the doc:

While the open data and web API policy will apply to all new systems and underlying data and content developed going forward, OMB will ask agencies to bring existing high-value systems and information into compliance over a period of time—a “look forward, look back” approach To jump-start the transition, agencies will be required to:

- Identify at least two major customer-facing systems that contain high-value data and content;

- Expose this information through web APIs to the appropriate audiences;

- Apply metadata tags in compliance with the new federal guidelines; and

- Publish a plan to transition additional systems as practical

Note the language here. This is again not a “let’s throw some data up there and see what happens” approach. I endorse doing that as well, but here the White House is demanding that departments be strategic about the data sets/APIs they create. Locate a data set that you know people want access to. This is easy to assess. Just look at pageviews, or go over FOIA/ATIP requests and see what is demanded the most. This isn’t rocket science – do what is in most demand first. But you’d be surprised how few governments want to serve up data that is in demand.
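If you want to see how little rocket science is involved, here is a toy sketch of ranking data sets by demand. The data sets, the numbers and the weighting (one formal request counted as 50 pageviews) are all made up for illustration; a real exercise would use your own analytics and FOIA/ATIP logs.

```python
def rank_by_demand(datasets, request_weight=50):
    """Sort data sets so the most in-demand come first.

    Each formal access request is counted as `request_weight` pageviews,
    which is an arbitrary assumption chosen for this example.
    """
    def score(dataset):
        return dataset["pageviews"] + request_weight * dataset["foi_requests"]

    return sorted(datasets, key=score, reverse=True)


# Entirely made-up figures.
datasets = [
    {"name": "transit schedules", "pageviews": 12000, "foi_requests": 3},
    {"name": "restaurant inspections", "pageviews": 8000, "foi_requests": 120},
    {"name": "tree inventory", "pageviews": 900, "foi_requests": 1},
]

ranked = rank_by_demand(datasets)
```

With these figures, restaurant inspections come out on top even though the page gets fewer views, because people keep filing requests for the underlying data.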

Another interesting inference one can make from the report is that its recommendations embrace the possibility that participants outside of government – both for-profit and non-profit – can build services on top of government information and data. Referring back to the chart above, see how the Presentation Layer includes both private and public examples? Consequently, a non-profit’s website dedicated to, say, job info for veterans could pull live data and information from various Federal Government websites, weave it together and present it in a way that is most helpful to the veterans it serves. In other words, the opportunity for innovation is fairly significant. This also has two additional repercussions. It means that services the government does not currently offer – at least in a coherent way – could be woven together by others. It also means there may be information and services the government simply never chooses to develop a presentation layer for – it may simply rely on private or non-profit sector actors (or other levels of government) to do that for it. This has interesting political ramifications in that it could allow the government to “retreat” from presenting these services and rely on others. There are definitely circumstances where this would make me uncomfortable, but the solution is not to avoid architecting the system this way; it is to ensure that such programs are funded in a way that ensures government involvement in all aspects – information, platform and presentation.

At this point I want to interject two tangential thoughts.

First, if you are wondering why your government is not doing this – be it at the local, state or national level – here’s a big hint: this is what happens when you make the CIO an executive who reports at the highest level. You’re just never going to get innovation out of your government’s IT department if the CIO reports into the fricking CFO. All that tells me is that IT is a cost centre that should be focused on sustaining itself (e.g. keeping computers on) and that you see IT as having no strategic relevance to government. In the private sector, in the 21st century, this is pretty much the equivalent of committing suicide for most businesses. For governments… making CIOs report into CFOs is considered a best practice. I’ve more to say on this. But I’m taking a deep breath and am going to move on.

Second, I love how the document is also so clear on milestones – and nicely visualized as well. It may be my poor memory but I feel like it is rare for me to read a government road map on any issue where the milestones are so clearly laid out.

It’s particularly nice when a government treats its citizens as though they can understand something like this, and isn’t afraid to be held accountable for a plan. I’m not saying that other governments don’t set out milestones (some do, many however do not). But often these deadlines are buried in reams of text. Here is a simple scorecard any citizen can look at. Of course, last time around, after the open government directive was issued immediately after Obama took office, they updated these scorecards for each department, highlighting whether milestones were green, yellow or red, depending on how the department was performing. All in front of the public. Not something I’ve ever seen in my country, that’s for sure.

Of course, the document isn’t perfect. I was initially intrigued to see the report advocates that the government “Shift to an Enterprise-Wide Asset Management and Procurement Model.” Most citizens remain blissfully unaware of just how broken government procurement is. Indeed, I say this, dear reader, with no idea where you live and who your government is, but I am enormously confident your government’s procurement process is totally screwed. And I’m not just talking about when they try to buy fighter planes. I’m talking pretty much all procurement.

Today’s procurement is perfectly designed to serve one group: big (IT) vendors. The process is so convoluted and so complicated that they are really the only ones with the resources to navigate it. The White House document essentially centralizes procurement further. On the one hand this is good: it means the requirements around platforms and data noted in the document can be more readily enforced. Basically the centre is asserting more control at the expense of the departments. And yes, there may be some economies of scale that benefit the government. But the truth is whenever procurement decisions get bigger, so too do the stakes, and so too does the process surrounding them. Thus there are a tiny handful of players that can respond to any RFP and real risks that the government ends up in a duopoly (kind of like with defense contractors). There is some wording around open source solutions that helps address some of this, but ultimately, it is hard to see how the recommendations are going to really alter the quagmire that is government procurement.

Of course, these are just some thoughts and comments that struck me and that I hope, those of you still reading, will find helpful. I’ve got thoughts on the White House Innovation Fellows especially given it appears to have been at least in part inspired by the Code for America fellowship program which I have been lucky enough to have been involved with. But I’ll save those for another post.