Sometimes even my hometown of Vancouver gets it wrong.

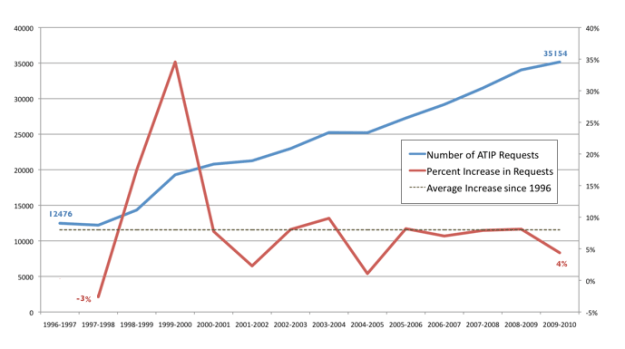

Reading Chad Skelton’s blog (which I read regularly and recommend to my fellow Vancouverites), I was reminded of the great work he did creating an interactive visualization of the city’s parking tickets as part of a series on parking in Vancouver. Indeed, it is worth noting that the entire series was powered by data supplied by the city. Sadly, it just wasn’t (and still isn’t) open data. Quite the opposite: it was data that was wrested from the city, with enormous difficulty, via an FOI (ATIP) request.

In the same blog post Chad recounts how he struggled to get the parking data from the city:

Indeed, the last major FOI request I made to the city was for its parking-ticket data. I had to fight the city tooth and nail to get them to cough up the information in the format I wanted it in (for months their FOI coordinator claimed, falsely, that she couldn’t provide the records in spreadsheet format). Then, when the parking ticket series finally ran, I got an email from the head of parking enforcement. He was wondering how he could get reprints of the series — he thought it was so good he wanted to hand it out to new parking enforcement officers during their training.

What is really frustrating about this paragraph is the last sentence. Obviously the people who find the most value in this analysis and tool are the city staff who manage parking infractions. So here is someone who, for free(!), provides an analysis and some stories that they now use to train new officers, and yet he had to fight to get the data. The city would have been poorer without Chad’s story and analysis. And yet it fought him. Worse, an important player in the civic space (and an open data ally) feels frustrated by the city.

There are, of course, other uses I could imagine for this data. It could be embedded into an application (ideally one like Washington DC’s Park IT DC, which lets you find parking meters on a map, identify whether they are available, and see local car crime rates for the area) so that you can assess the risk of getting a ticket if you choose not to pay. This feels like the worst-case scenario for the city and, frankly, it doesn’t feel that bad and would probably not affect people’s behaviour that much. But there may be other important uses of this data: it may correlate in interesting and unpredictable ways with other events, connections that, if made and shared, might actually allow the city to leverage its enforcement officers more efficiently and effectively.

Of course, we won’t know what those could be, since the data isn’t shared, but it is the kind of thing Vancouver should be doing, given the existence of its open data portal. But all governments should take note. There is a cost to not sharing data: lost opportunities, lost insights and value, lost allies and networks of people interested in contributing to your success. It’s all our loss.

So while the app is fairly light on features today… I can imagine a future where it becomes significantly more engaging and comprehensive, using open data on the data and city services to show maps of where and how money is spent, as well as post reminders for in-person meetups, tours of facilities, and dial-in town hall meetings. The best way to get to these more advanced features is to experiment with getting the lighter features right today. The challenge for Calgary on this front is that it seems to have no plans for sharing much data with the public (that I’ve heard of),