Lawyers aren’t generally known to be the most technologically forward-looking group – but here in Canada they have done one thing really, really well: radically lowering the transaction costs of sharing critical information about their industry.

CanLII, the non-profit managed by the Federation of Law Societies of Canada, has the goal “to make Canadian law accessible for free on the Internet.” In essence, CanLII copies all of the materials produced by the courts, organizes them and makes them searchable and re-usable by anyone. For realtors wondering about their future, looking over this service might be a good place to start.

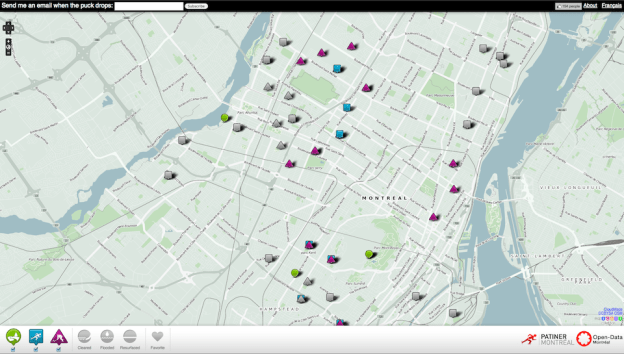

Consider MLS.ca (now rebranded as realtor.ca), the website run by the Canadian Real Estate Association (CREA) that shares information on what homes are for sale where. A few of you may also know that the Competition Bureau and CREA have recently been tangling over access to MLS. While it is now easier for people to list properties on MLS, the data within MLS is very restricted. Much of the data can be seen only by realtors, and re-use of the data appears strictly verboten. These restrictions cause Canadians to suffer from what I like to call the Hulu Syndrome – they can see what a more open system would look like by surfing the various property websites in the United States – but they are stuck using MLS when trying to browse for a home to buy.

For Canadian realtors wanting to know what the future looks like for a professional service in a world where data and information are widely available, CanLII offers both a window and a model. Unlike MLS, the great thing about CanLII is that it serves everyone, not just lawyers. It isn’t hard to imagine a world where lawyers insisted that only they could access the cataloging system they pay for (lawyers pay a small annual fee to support CanLII), much like only realtors can access the full database of MLS. In such a world, if you wanted to read a judgement or view court documents on a specific case, only a lawyer could access them for you, and then they would interpret them for you, and, to carry the analogy to its logical conclusion, you would rarely or likely never see the original documents.

Thankfully for the legal system, for the marketplace for legal services and for our democracy, CanLII doesn’t work this way. As mentioned, anyone can search, find and download all the information. Indeed, look at CanLII’s Terms of Use:

Subject to the following paragraph and the below conditions pertaining to prohibited use, legal materials published on the CanLII website, such as legislation, regulations and decisions, including editorial enhancements inserted into the documents by CanLII, such as hyperlinks and information in headers and footers, can be copied, printed and distributed by Users free of charge and without any other authorization from CanLII, provided that CanLII is identified as the source of the document.

Compare this to MLS’s terms of use:

This database and all materials on this site are protected by copyright laws and are owned by The Canadian Real Estate Association (CREA) or by the member who has supplied the data. Property listings and other data available on this site are intended for the private, non-commercial use by individuals. Any commercial use of the listings or data in whole or in part, directly or indirectly, is specifically forbidden except with the prior written authority of the owner of the copyright.

(Side note: I’m pretty sure you can’t copyright data, so I’m not sure what legal rights are being exercised here.)

Of course, even though CanLII makes legal documents freely available, many people still want to use lawyers because they don’t have time or, just as often, realize they need expert advice in this complicated field.

The same would be true of MLS. Many, many buyers will still want to use a realtor, although the buyers and sellers in the marketplace would be smarter and more informed – and this would probably lead to a better marketplace and happier customers. There are, of course, a number of buyers and sellers who will simply freeload off MLS’s data to sell or buy their home on their own (much like some people probably “freeload” off CanLII to represent themselves or do research). But these are probably clients who would prefer to be doing it this way anyway. Giving them full access to the database may either a) cause them to realize they do need professional help or b) take out of the realtors’ client pool people who don’t really want to use a realtor in the first place and are thus… terrible customers.

This isn’t to say that sharing MLS data won’t be disruptive. I suspect that some people will automate the buying/selling process, which a percentage of the marketplace will prefer to a handheld process. But I also suspect that, at some point, this will happen anyway (someone will figure out a model to make it work), at which point CREA and the realtors will have been firmly entrenched in the minds of Canadians as the obstacle to a better, more efficient marketplace, not the leaders who helped foster it.

Lawyers aren’t often known for clarity and simplicity, but clearly when they get it right, they get it right. I hope other professional services will look at what they are up to.