In the UK, the default is open.

Yesterday, the United Kingdom made an announcement that radically reformed how it will manage what will become the government’s most important asset in the 21st century: knowledge & information.

On the National Archives website, the UK Government made public its new license for managing software, documents and data created by the government. The document is both far reaching and forward looking. Indeed, I believe this policy may be the boldest and most progressive step taken by a government since the United States decided that documents created by the US government would directly enter the public domain and not be copyrighted.

In almost every aspect of the license, the UK government will manage its “intellectual property” by setting the default to be open and free.

Consider the introduction to the framework:

The UK Government Licensing Framework (UKGLF) provides a policy and legal overview for licensing the re-use of public sector information both in central government and the wider public sector. It sets out best practice, standardises the licensing principles for government information and recommends the use of the UK Open Government Licence (OGL) for public sector information.

The UK Government recognises the importance of public sector information and its social and economic value beyond the purpose for which it was originally created. The public sector therefore needs to ensure that simple licensing processes are in place to enable and encourage civil society, social entrepreneurs and the private sector to re-use this information in order to:

- promote creative and innovative activities, which will deliver social and economic benefits for the UK

- make government more transparent and open in its activities, ensuring that the public are better informed about the work of the government and the public sector

- enable more civic and democratic engagement through social enterprise and voluntary and community activities.

At the heart of the UKGLF is a simple, non-transactional licence – the Open Government Licence – which all public sector bodies can use to make their information available for free re-use on simple, flexible terms.

And just in case you thought that was vague, consider these two quotes from the framework. This one for data:

It is UK Government policy to support the re-use of its information by making it available for re-use under simple licensing terms. As part of this policy most public sector information should be made available for re-use at the marginal cost of production. In effect, this means at zero cost for the re-user, especially where the information is published online. This maximises the social and economic value of the information. The Open Government Licence should be the default licence adopted where information is made available for re-use free of charge.

And this one for software:

- Software which is the original work of public sector employees should use a default licence. The default licence recommended is the Open Government Licence.

- Software developed by public sector employees from open source software may be released under a licence consistent with the open source software.

These statements are unambiguous and a dramatic step in the right direction. Information and software created by governments are, by definition, public assets. Tax dollars have already paid for their collection and/or development, and the government has already benefited from using them. They are also non-rivalrous goods. This means that, unlike a road, if I use government information or software, I don’t diminish your ability to use it (in contrast, only so many cars can fit on a road, and they wear it down). Indeed, with intellectual property quite the opposite is true: by using it I may actually make the knowledge more valuable.

This is, obviously, an exciting development. It has generated a number of thoughts:

1. With this move the UK has further positioned itself at the forefront of the knowledge economy:

By enacting this policy the UK government has just enabled the entire country, and indeed the world, to use its data, knowledge and software to do whatever people would like. In short an enormous resource of intellectual property has just been opened up to be developed, enhanced and re-purposed. This could help lower costs for new software products, diminish the cost of government and help foster more efficient services. This means a great deal of this innovation will be happening in the UK first. This could become a significant strategic advantage in the 21st century economy.

2. Other jurisdictions will finally be persuaded it is “safe” to adopt open licenses for their intellectual property:

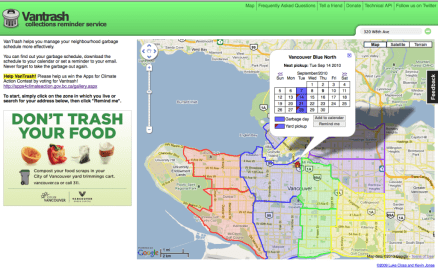

If there is one thing that I’ve learnt dealing with governments it is that, for all the talk of innovation, many governments, and particularly their legal departments, are actually scared to be the first to do something. With the UK taking this bold step I expect a number of other jurisdictions to more vigorously explore this opportunity. (It is worth noting that Vancouver did, as part of the open motion, state that software developed by the city would have an open license applied to it, but the policy work to implement such a change has yet to be announced.)

3. This should foster a debate about information as a public asset:

In many jurisdictions there is still the myth that governments can and should charge for data. Britain’s move should provide a powerful example of why these types of policies should be challenged. There is significant research showing that, for GIS data for example, the money collected from the sale of data simply pays for the system used to collect that money. This is to say nothing of the policy and managerial overhead of choosing to manage intellectual property. Charging for public data has never made financial sense, and it raises a number of ethical challenges (so only the wealthy get to benefit from a publicly derived good?). Hopefully, for less progressive governments, the UK’s move will refocus the debate along the right path.

4. It is hard to displace a policy leader once they are established:

The real lesson here is that innovative and forward looking jurisdictions have huge advantages that they are likely to retain. It should come as no surprise that the UK made this move – it was among the first national governments to create an open data portal. By being an early mover it has seen the challenges and opportunities before others and so has been able to build on its success more quickly.

Consider other countries – like Canada – that may wish to catch up. Canada does not even have an open data portal as of yet (although this may soon change). This means that it is now almost 2 years behind the UK in assessing the opportunities and challenges around open data and rethinking intellectual property. These two years cannot be magically or quickly caught up. More importantly, it suggests that some public services have cultures that recognize and foster innovation – especially around key issues in the knowledge economy – while others do not.

Knowledge economies will benefit from governments that make knowledge, information and data more available. Hopefully this will serve as a wake up call to other governments in other jurisdictions. The 21st century knowledge economy is here, and government has a role to play. Best not be caught lagging.

And while we are talking about conferences, I also want to mention Open Government Data Camp, which will be happening in London, UK on November 18th and 19th. I’m excited to say I’ll be there with our friends from the Open Knowledge Foundation and the Sunlight Foundation, along with numerous others. Harder to get to, but also likely to be quite, quite fun…
