Okay, before I dive in, a few things.

1) Sorry for the lack of posts last week. Life’s been hectic. Between Code for America, a number of projects and a few articles I’m trying to finish, blogging slipped.

2) I’m presenting on Open Data and Open Government to the Canadian Parliament’s Access to Information, Privacy and Ethics Committee today. More on that later this week.

3) I’m excited about this post.

When it comes to opening up government data, many of us focus on governments: we cajole, we pressure, we try to persuade them to open up their data. It’s an approach we will have to keep taking for a great deal of the data our tax dollars pay to collect and that governments continue not to share. There is, however, another model.

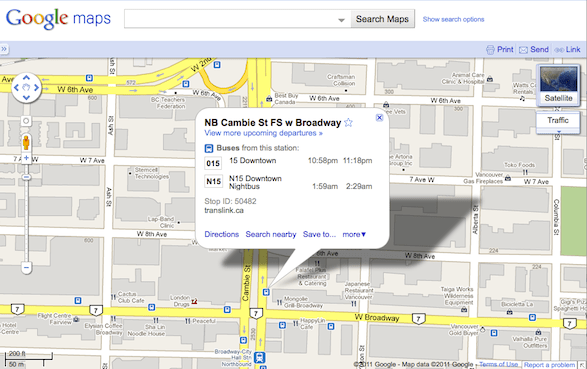

Consider transit data. This data is sought-after, intensely useful, and probably the category of data most experimented with by developers. Why is this? Because it has been standardized. Why has it been standardized? Because local governments (responding to citizen demand) have been desperate to get their transit data integrated with Google Maps (see image).

It turns out that to get your transit data into Google Maps, Google insists that you submit it in a single structured format, which has come to be known as the General Transit Feed Specification (GTFS). The great thing about the GTFS is that it isn’t just Google that can use it. Anyone can play with data converted into the GTFS. Better still, because the data structure is standardized, an application someone develops, or an analysis they conduct, can be ported to other cities that share their transit data in GTFS format (like, say, my home town of Vancouver).
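
To make the portability point concrete, here is a minimal Python sketch. It relies only on what the GTFS spec defines for the `stops.txt` file inside a feed's zip archive (the `stop_id`, `stop_name`, `stop_lat` and `stop_lon` columns); the sample rows and the helper names `load_stops` and `load_stops_from_feed` are hypothetical. Because every GTFS feed uses the same file and column names, the same code should read any city's feed.

```python
import csv
import io
import zipfile

# Hypothetical sample in the shape of a GTFS stops.txt file; the values are illustrative only.
SAMPLE_STOPS_TXT = """stop_id,stop_name,stop_lat,stop_lon
1001,Example Stop A,49.2732,-123.1004
1002,Example Stop B,49.2833,-123.1160
"""


def load_stops(stops_txt: str) -> list[dict]:
    """Parse the text of a GTFS stops.txt file into simple dicts."""
    reader = csv.DictReader(io.StringIO(stops_txt))
    return [
        {
            "id": row["stop_id"],
            "name": row["stop_name"],
            "lat": float(row["stop_lat"]),
            "lon": float(row["stop_lon"]),
        }
        for row in reader
    ]


def load_stops_from_feed(feed_zip_path: str) -> list[dict]:
    """Read stops.txt straight out of any city's GTFS zip archive."""
    with zipfile.ZipFile(feed_zip_path) as feed:
        # "utf-8-sig" also strips a leading byte-order mark if one is present.
        text = feed.read("stops.txt").decode("utf-8-sig")
    return load_stops(text)


if __name__ == "__main__":
    for stop in load_stops(SAMPLE_STOPS_TXT):
        print(f"{stop['name']}: ({stop['lat']}, {stop['lon']})")
```

Pointing `load_stops_from_feed` at a different city's feed is the whole "port": nothing city-specific lives in the code.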

In short, what we have here is a powerful model both for creating open data and standardizing this data across thousands of jurisdictions.

So what does this have to do with Yelp! and Health Care Costs?

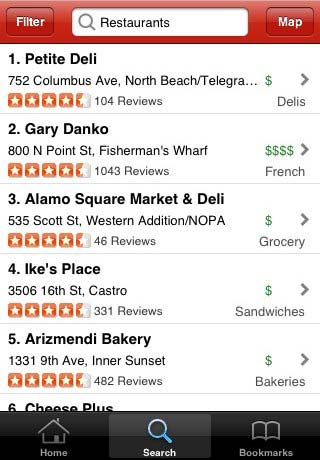

For those not in the know, Yelp! is a location-based rating service for mobile phones. I’m particularly fond of its restaurant locator: it will show you what is nearby and how it has been rated by other users. Handy stuff.

But think bigger.

Most cities in North America inspect restaurants for health violations. This is important stuff. Restaurants with more violations are more likely to transmit diseases and foodborne illnesses, give people food poisoning and god knows what else. Sadly, in most cases the results of these inspections are posted in the most useless place imaginable: the local authority’s website.

I’m willing to wager almost anything that the only time anyone visits a food inspection website is after they have gotten food poisoning. Why? Because they want to know if the jerks have already been cited.

No one checks these agencies’ websites before choosing a restaurant. Consequently, one of the biggest benefits of the inspection data, shifting market demand to more sanitary options, is lost. And of course, there is real evidence that restaurants will improve their sanitation, and that people will avoid restaurants that get poor ratings from inspectors, when the data is conveniently available. Indeed, in the book *Full Disclosure: The Perils and Promise of Transparency*, Fung, Graham and Weil noted that after Los Angeles required restaurants to post food inspection results, “Researchers found significant effects in the form of revenue increases for restaurants with high grades and revenue decreases for C-graded (poorly rated) restaurants.” More importantly, the study Fung, Graham and Weil reference also suggested that making the rating system public positively impacted healthcare costs. After inspection results in Los Angeles were posted on restaurant doors (not on some never-visited website), the county experienced a reduction in emergency room visits, the most expensive point of contact in the system. As the study notes, these were:

> an 18.6 percent decline in 1998 (the first year of program operation), a 4.8 percent decline in 1999, and a 5.4 percent decline in 2000. This pattern was not observed in the rest of the state.

This is a stunning result.

So, now imagine that rather than just offering contributor-generated reviews of restaurants, Yelp! actually shared real food inspection data! Think of the impact this would have on the restaurant industry. Suddenly, everyone with a mobile phone and Yelp! (it’s free) could make an informed decision based not just on the quality of a restaurant’s food, but also on its sanitation. Think of the millions of dollars (hundreds of millions?) that could be saved in the United States alone.

All that’s needed is a simple first step: Yelp! needs to approach one major city (say, New York or San Francisco) and work with it to develop a sensible way to share food inspection data. This is what happened with Google Maps and the GTFS: it all started with one city. Once Yelp! develops the feed, it should call it something generic, like the General Restaurant Inspection Data Feed (GRIDF), and tell the world it is looking for other cities to share their data in that format. If they do, Yelp! promises to include it in its platform. I’m willing to bet anything that once one major city has it, other cities will start to clamor to get their food inspection data shared in the GRIDF format. What makes it better still is that it wouldn’t just be Yelp! that could use the data. Any restaurant review website or phone app could use it, be it Urban Spoon or the New York Times.
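
To show what "one simple, shared format" might buy an app developer, here is a minimal Python sketch of a hypothetical GRIDF feed. No such format exists yet: the CSV layout, the column names (`business_id`, `business_name`, `inspection_date`, `score`, `violations`), the assumed 0 to 100 score scale and the sample rows are all illustrative assumptions. The sketch parses the feed and keeps each restaurant's most recent inspection, which is what a review app would likely display next to a listing.

```python
import csv
import io
from dataclasses import dataclass
from datetime import date

# Hypothetical GRIDF sample: the columns and rows are illustrative assumptions, not a real spec.
SAMPLE_GRIDF = """business_id,business_name,inspection_date,score,violations
r1,Example Diner,2010-03-02,72,5
r1,Example Diner,2010-09-14,91,1
r2,Sample Noodle House,2010-06-20,85,2
"""


@dataclass
class Inspection:
    business_id: str
    business_name: str
    inspection_date: date
    score: int  # assumed 0-100 scale
    violations: int


def parse_gridf(text: str) -> list[Inspection]:
    """Parse hypothetical GRIDF CSV text into Inspection records."""
    reader = csv.DictReader(io.StringIO(text))
    return [
        Inspection(
            business_id=row["business_id"],
            business_name=row["business_name"],
            inspection_date=date.fromisoformat(row["inspection_date"]),
            score=int(row["score"]),
            violations=int(row["violations"]),
        )
        for row in reader
    ]


def latest_by_business(inspections: list[Inspection]) -> dict[str, Inspection]:
    """Keep only the most recent inspection for each business."""
    latest: dict[str, Inspection] = {}
    for insp in inspections:
        current = latest.get(insp.business_id)
        if current is None or insp.inspection_date > current.inspection_date:
            latest[insp.business_id] = insp
    return latest


if __name__ == "__main__":
    for insp in latest_by_business(parse_gridf(SAMPLE_GRIDF)).values():
        print(f"{insp.business_name}: score {insp.score}, {insp.violations} violation(s)")
```

Because the parsing depends only on the shared column names, any city publishing in the same layout could be added without new code, which is the same property that made the GTFS so useful.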

The opportunity here is huge. It’s also a win for everyone: consumers, health insurers, hospitals, Yelp!, restaurant inspection agencies, even responsible restaurant owners. It would also be a huge win for government as a platform and for open data. Hey, Yelp! Call me if you are interested.